TL;DR: the direct answer. On August 2, 2026, the EU AI Act (Regulation EU 2024/1689, in force since August 2024) becomes applicable to high-risk AI systems, and Annex III point 4 explicitly lists AI systems used in recruitment (CV screening, interview scoring, candidate assessment). Combined with GDPR Article 22 (in force since 2018), in 2026 you have 10 concrete rights when a decision is made or influenced by AI, including information, explanation, human oversight, data access and contestation. In the US, NYC Local Law 144 (bias audits for AEDTs), Illinois AIVLA (video interview transparency), California CPRA (automated decision-making opt-outs) and the Colorado AI Act (2024) fill equivalent gaps. The cases Amazon 2018 (sexist screening tool withdrawn), HireVue 2021 (facial analysis scrapped after FTC complaint), Mobley v. Workday 2024 (1.1M rejections under class action) and the CNIL 2023 fine (€105k) show that the law works, provided you know your rights and exercise them. Template letters, EU DPA / equality body / labor court paths, and US equivalents below.

In 2026, 50 to 70% of applications in the EU and US pass through an automated system at some stage (ATS with scoring, AI CV screening, asynchronous video interview analyzed by AI). A large share of candidates don't know it, aren't informed, and don't know their recourse options.

This article is a practical guide: your concrete rights, how to verify whether an AI evaluated you, how to exercise your rights in writing, and where to go if the employer responds poorly.

The landscape: AI in 2026 hiring

The European and US figures for 2026 show the scale of the phenomenon — and the gap between deployment and candidate information.

The headline: 65% of large employers use AI in hiring, but only 11% of candidates are informed. That's exactly the information gap that the AI Act and GDPR aim to close — provided you exercise your rights.

What the AI Act concretely changes on August 2, 2026

The AI Act (Regulation EU 2024/1689, adopted May 2024) classifies AI systems into 4 risk levels: unacceptable risk (banned), high-risk (strict obligations), limited risk (transparency), minimal risk (unrestricted). Recruitment AI systems clearly fall into the "high-risk" category.

Systems covered (Annex III point 4)

Annex III, point 4, lists precisely:

- 4(a) AI systems intended for recruitment or selection of natural persons, notably for analyzing and filtering applications, evaluating candidates (including automated tests, video analyses, asynchronous AI-scored interviews)

- 4(b) AI systems intended to make or influence decisions affecting the terms of the work relationship, promotion, termination, task allocation based on behavior, performance or individual traits

Concrete examples covered:

- ATS modules that score your CV (Workday, Greenhouse, iCIMS, SmartRecruiters when they activate AI scoring features)

- Asynchronous video interview tools that score your expression, tone of voice, micro-expressions (HireVue, Spark Hire, Willo)

- AI-powered psychometric tests (Pymetrics, Arctic Shores, AssessFirst)

- Pre-qualification chatbots (Paradox, Mya) that decide whether you proceed to the next stage

- Generative AI models used to summarize or evaluate your application

Obligations imposed on employers from August 2, 2026

1. Full technical documentation and logging (Articles 11-12): the system provider must document how the system works, its training data, identified biases and mitigation measures, and the system must record automatic logs. The deploying employer must retain those logs (Article 26(6)).

2. Effective human oversight (Article 14): a decision concerning you cannot be fully automated. A human must have the capacity — not just theoretical — to modify the decision.

3. Non-discrimination and bias audits (Article 10): training data must be evaluated for bias, and measures must be taken to avoid direct and indirect discrimination.

4. Transparency to the candidate (Article 26(7)): you must be informed that you're interacting with a high-risk AI system and understand what it does.

5. Incident reporting: any significant failure must be reported to the national authority (CNIL in France, BfDI in Germany, etc.).

6. EU registration: high-risk systems deployed in the EU must be registered in a public database (you'll be able to verify Workday / HireVue / etc. compliance).

What this gives you, concretely

Before August 2, 2026, you could already demand much via GDPR. After August 2, 2026, you additionally gain:

- The right to be specifically informed that you're being processed by a high-risk AI

- The right to demand documented human oversight (not just "a human looked at it")

- The right to demand the bias audit documentation for the system

- Recourse via a dedicated sector authority (in France, the CNIL has been designated as the supervisory authority for high-risk AI systems in recruitment)

US counterpart: a patchwork, not a federal law

The US has no equivalent to the AI Act at the federal level, but several state and city laws create comparable rights:

- NYC Local Law 144 (in force July 2023): employers using an AEDT (Automated Employment Decision Tool) on NYC-resident candidates must conduct an annual bias audit, publish the results, and notify candidates at least 10 business days before use.

- Illinois AIVLA — Artificial Intelligence Video Interview Act (in force 2020, expanded 2022): transparency, consent, deletion on request, annual demographic reporting.

- California CPRA (in force 2023, ADMT regulations expected 2026): right to opt out of automated decision-making, right to access, right to know the logic.

- Colorado AI Act (SB 24-205, originally effective February 1, 2026, since postponed to June 30, 2026): obligations close to those of the EU AI Act, including risk management, impact assessments and consumer notice.

- Federal EEOC guidance (May 2023, updated 2025): AI tools must comply with Title VII, ADA, ADEA. Vendor liability + employer liability.

If you apply to a US company with offices in the EU, or to an EU subsidiary of a US company, the AI Act applies to that entity. For fully US positions, rely on the relevant state law + EEOC guidance.

Your 10 concrete rights in 2026 (GDPR + AI Act combined)

You're not gaining 10 new rights on August 2 — many existed under GDPR. But the AI Act reinforces their application in the specific context of AI recruitment. Here's the operational grid.

Right 1 — To be informed (GDPR Articles 13-14 + AI Act Article 26(7))

The employer must inform you, before collecting your data, of: the identity of the data controller, the purposes, legal bases, retention period, existence of automated processing, and from August 2, 2026: the use of a high-risk AI system. In practice, this is the "privacy policy" / "candidate notice" you should find on the job ad or career site.

Right 2 — To access your data (GDPR Article 15)

You can request in writing a copy of all data the employer holds about you (CV, interview notes, automated scores, internal feedback, final decision and its motive). Response is due within one month (GDPR Article 12). Free.

Right 3 — To rectify your data (GDPR Article 16)

If the AI or ATS parsed your CV incorrectly (wrong experience date, misidentified degree, missed skill), you can demand rectification. Particularly useful when AI scoring was based on erroneous data.

Right 4 — Not to be subject to a fully automated decision (GDPR Article 22)

Except in specific cases (explicit consent, contractual necessity, legal authorization), a decision affecting you cannot be made solely by an AI. A rejection based exclusively on AI scoring without human review is contestable.

Right 5 — To obtain human intervention (GDPR Article 22(3))

You can ask in writing for a human to re-examine your file. The employer must document this review — it cannot be merely formal.

Right 6 — To obtain an explanation of the decision (GDPR Article 22(3) + AI Act Article 86)

AI Act Article 86 (applicable from August 2026) creates an explicit right to "a clear and meaningful explanation" of the decision for high-risk systems. Not "your profile didn't match" — but which criteria, what weight, which human oversight.

Right 7 — To contest the decision (GDPR Article 22(3))

You can express your point of view and contest the decision — first in a complaint letter to the company, then via authorities if the response is unsatisfactory.

Right 8 — To erasure (GDPR Article 17, "right to be forgotten")

Once the process is over (with or without hiring), you can request deletion of your data within legal limits (the employer may keep some records to defend against litigation, but the duration is bounded — CNIL recommendation: 2 years max for a non-hired candidate).

Right 9 — To object to profiling (GDPR Article 21)

You can object to the automated processing of your data for profiling purposes (scoring, segmentation, classification). The employer must demonstrate a compelling legitimate reason to continue despite the objection.

Right 10 — To file a complaint (GDPR Article 77 + AI Act)

You can file with your national Data Protection Authority for GDPR and AI Act violations, with the equality body for discrimination, and with the labor court if damages are demonstrable. Details below.

4 landmark cases (2018-2026)

Case 1 — Amazon 2018: the sexist AI withdrawn

Amazon had developed an internal CV screening tool for tech roles from 2014. Trained on 10 years of mostly male CVs, the tool learned to penalize CVs mentioning "women's chess club" or women's colleges. Amazon tried to correct the bias, failed, and officially withdrew the tool in 2018 (Reuters). The case was a global wake-up call on the risk of bias in recruitment AI.

Case 2 — HireVue 2021: FTC complaint and end of facial analysis

HireVue, an asynchronous video interview platform used by over 700 large employers (Hilton, Unilever, Goldman Sachs), scored candidates on facial micro-expressions, tone of voice, word choice. After a complaint by the Electronic Privacy Information Center (EPIC) to the FTC and several academic studies (notably from MIT) pointing to discriminatory biases against minorities, HireVue dropped facial analysis in January 2021 (Wall Street Journal). The platform continues to exist on a restricted basis.

Case 3 — Workday 2024-2026: the 1.1 million applications class action

Derek Mobley, an African-American candidate over 40, sued Workday in 2023 after more than 100 job rejections, over a period in which he faced "application apartheid" through the Workday ATS. The Northern District of California certified the collective action in May 2025, expanding it to over 1.1 million applications potentially affected by discrimination (age, race, disability). The case is still ongoing in 2026 and is the first large-scale test of ATS vendor liability for algorithmic bias. Coverage: Reuters.

Case 4 — CNIL 2023: French recruiter fined for illegal automated processing

The CNIL (French Data Protection Authority) issued a €105,000 fine in 2023 against a French recruitment firm that used an automated scoring tool without informing candidates and without human review of rejections. The decision clearly established that: (1) ex-ante information is mandatory, (2) a human must review rejections, (3) the candidate must be able to request explanation and rectification. It's the most important EU case on this topic and a template for enforcement under the AI Act.

How to tell if you were processed by AI

Not easy, because the information is often not given upfront. 5 signals to watch for (the fifth applies only in the US):

Signal 1 — The speed of the rejection

If you applied at 2am and received a rejection at 2:02am, an automated system made the decision (or a pre-filter AI). A human wouldn't have been at work. From August 2, 2026, this case must be explicitly disclosed.

Signal 2 — Asynchronous interviews with analysis

If you're asked to record video answers without a human on the other end (HireVue, Spark Hire, Willo-type platform), there's a strong likelihood the analysis is AI-based — at least partially. You have the right to ask which platform is used and what analysis is performed.

Signal 3 — Gamified psychometric tests

Pymetrics games, AssessFirst tests, Arctic Shores assessments — all rely on algorithmic analysis. Often legitimate, but you must be informed and have access to your results.

Signal 4 — The employer's privacy policy

Read the "candidate notice" or "applicant privacy policy" page on the company's career site. Serious employers in 2026 explicitly mention their AI tools and GDPR/AI Act compliance. Absence of this information = red flag on the compliance level.

Signal 5 (US only) — The NYC Local Law 144 notice

For a role based in New York City, the employer must notify you at least 10 business days before using an AEDT. The notice must list: the source of data, the type of data, the types of decisions the AEDT will make. If you don't receive this notice, that's grounds for a complaint.
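To check the 10-business-day notice yourself, a minimal sketch along these lines can help. It is only an illustration: the function names are hypothetical, the dates are made up, and the counting convention (the notice day is excluded, the day of use is included, public holidays are ignored) is an assumption rather than the official counting rule.

```python
from datetime import date, timedelta


def business_days_between(notice: date, use: date) -> int:
    """Count weekdays (Mon-Fri) after `notice`, up to and including `use`.

    Assumption: public holidays are not excluded.
    """
    days = 0
    current = notice
    while current < use:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 means Monday to Friday
            days += 1
    return days


def notice_was_timely(notice: date, use: date, required: int = 10) -> bool:
    """True if at least `required` business days separate notice and use."""
    return business_days_between(notice, use) >= required


# Hypothetical example: notice received Monday March 2, 2026,
# AEDT used on Friday March 13, 2026.
notice_date = date(2026, 3, 2)
aedt_use_date = date(2026, 3, 13)
print(business_days_between(notice_date, aedt_use_date))  # 9
print(notice_was_timely(notice_date, aedt_use_date))      # False
```

If the result is below 10, keep the dated notice and the dated assessment invitation together: they are the written record a complaint would rely on.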

The template letter to exercise your rights (GDPR + AI Act)

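Adapt this model. Send it by email or registered mail with acknowledgment to the DPO (Data Protection Officer) of the EU-based company. A DPO is mandatory under GDPR Article 37 for public bodies and for organizations whose core activities involve large-scale monitoring or large-scale processing of sensitive data; where one has been appointed, the contact details are in the privacy policy. For US positions, replace GDPR/AI Act references with CPRA/NYC 144/state law references, as in the second letter below.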

EU version (GDPR + AI Act)

Subject: Exercise of my rights as a candidate, Ref. application [job title] dated [date]

Dear Data Protection Officer,

As a candidate for your [job title] position submitted on [date], I wish to exercise the rights granted to me by the General Data Protection Regulation (GDPR, Regulation EU 2016/679) and the European AI Act (Regulation EU 2024/1689, applicable to high-risk systems since August 2, 2026).

I hereby request:

- GDPR Article 15 (Right of access): a copy of all data you hold concerning me, including scores generated by any automated system, interview notes, internal evaluations, and the final decision with its rationale.

- GDPR Articles 13-14 + AI Act Article 26(7): explicit confirmation of the AI systems used in processing my application (CV screening, scoring, video analysis, psychometric tests), their purpose, their criteria, and the human oversight in place.

- GDPR Article 22 (Automated decision): information on the existence of automated processing that had an effect on my application, and if applicable, effective human intervention to re-examine my file.

- AI Act Article 86: a clear and meaningful explanation of the criteria that led to the decision concerning me, if a high-risk AI system was used.

- GDPR Article 16 (Right to rectification): if applicable, correction of any data concerning me that would be inaccurate or incomplete.

Please respond within the one-month deadline provided by GDPR Article 12. Failing this, I reserve the right to lodge a complaint with the competent Data Protection Authority in accordance with GDPR Article 77.

Sincerely,

[First Name Last Name] [Address] [Email] [Date]

US version (CPRA + NYC Local Law 144)

Subject: Consumer Rights Request, Employment Application Ref. [job title] dated [date]

Dear Privacy Officer,

Pursuant to the California Privacy Rights Act (CPRA), [applicable state law, e.g., NYC Local Law 144 for NYC roles], and EEOC guidance on the use of automated systems in employment decisions, I hereby exercise the following rights as an applicant to your [job title] position submitted on [date]:

- Right to Know (CPRA §1798.110): the categories and specific pieces of personal information collected about me, the purposes, and whether they were shared with third parties including AI vendors.

- Right to Access (CPRA §1798.115): a copy of all personal information you hold about me, including any scores, rankings, or assessments generated by an Automated Employment Decision Tool (AEDT) or other algorithmic system.

- Right to Opt-Out of Automated Decision-Making (CPRA §1798.185 and upcoming ADMT regulations): I request to opt out of any solely automated processing that produced legal or similarly significant effects on my application, and I request meaningful human review.

- NYC Local Law 144 disclosure (if applicable): confirmation of whether an AEDT was used, the vendor, the latest bias audit results, and the data sources used.

- Right to Correct (CPRA §1798.106): correction of any inaccurate personal information used in the decision.

Please respond within the statutory deadline (45 days under CPRA). Failing this, I reserve the right to file a complaint with the California Attorney General, the California Privacy Protection Agency, the NYC Department of Consumer and Worker Protection, and/or the EEOC.

Sincerely,

[First Name Last Name] [Address] [Email] [Date]

In practice, 30 to 50% of companies respond seriously to this letter (CFE-CGC survey March 2026, comparable rates in US CPRA enforcement reports). The others respond evasively or not at all — that's when you escalate to the recourse options.
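To know when to escalate, it helps to note the response deadline on the day you send the letter. The sketch below computes the one-month GDPR deadline and the 45-day CPRA deadline quoted in the letters. The function names are hypothetical, and two simplifications are assumptions: the clock starts on the sending date, and a month-end date is clamped to the last day of the following month.

```python
import calendar
from datetime import date, timedelta


def add_one_month(d: date) -> date:
    """Same day next month, clamped to that month's last day (assumption)."""
    year = d.year + (d.month // 12)   # December rolls over to next year
    month = d.month % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(d.day, last_day))


def response_deadline(sent: date, regime: str) -> date:
    """Deadline for the employer's answer under the given regime."""
    if regime == "gdpr":
        return add_one_month(sent)          # one month, GDPR Article 12
    if regime == "cpra":
        return sent + timedelta(days=45)    # 45 days under CPRA
    raise ValueError(f"unknown regime: {regime!r}")


# Hypothetical example: letter sent on January 31, 2026.
sent_on = date(2026, 1, 31)
print(response_deadline(sent_on, "gdpr"))  # 2026-02-28
print(response_deadline(sent_on, "cpra"))  # 2026-03-17
```

Both regimes allow extensions in some circumstances, so treat the computed date as the earliest point at which silence becomes worth escalating.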

The recourse paths — EU and US

Choose based on the type of grievance.

EU:

- GDPR or AI Act violation (no information, automated decision without oversight, refusal to grant access) → National Data Protection Authority (CNIL, BfDI, AEPD, Garante, DPC, etc.)

- Documented discrimination on a protected characteristic (race, gender, age, disability, religion, sexual orientation) → Equality body (Défenseur des droits in France, EHRC in UK, Equinet members)

- Concrete and demonstrated material or moral damage (loss of opportunity after advanced talks) → Labor court

US:

- CPRA or state privacy violation (no opt-out, no access, AEDT misuse) → California AG, CPPA (California), or relevant state AG

- NYC AEDT violation (no bias audit, no 10-business-day notice) → NYC Department of Consumer and Worker Protection ($500-$1,500 per violation)

- Discrimination under Title VII / ADA / ADEA → EEOC (federal, 180-300 day window) or state human rights commission

- Actual damages from job loss / rescinded offer → federal or state court (typically requires plaintiff-side employment attorney)

For each path, gather upfront: the ad, written correspondence, DPO responses, tests taken, dates. The written record is your only reliable ally in 2026.
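The routing above is essentially a lookup from (region, type of grievance) to the body that handles it. As a rough sketch, it can be written as a small table. The keys and the function name are hypothetical labels chosen for illustration, and the values simply restate the list.

```python
from typing import Optional

# (region, grievance type) -> where to file, restating the list above.
RECOURSE = {
    ("EU", "privacy"): "National Data Protection Authority",
    ("EU", "discrimination"): "Equality body",
    ("EU", "damages"): "Labor court",
    ("US", "privacy"): "California AG, CPPA, or relevant state AG",
    ("US", "aedt"): "NYC Department of Consumer and Worker Protection",
    ("US", "discrimination"): "EEOC or state human rights commission",
    ("US", "damages"): "Federal or state court",
}


def recourse_for(region: str, grievance: str) -> Optional[str]:
    """Return the filing body, or None if the combination is not listed."""
    return RECOURSE.get((region.upper(), grievance.lower()))


print(recourse_for("eu", "privacy"))   # National Data Protection Authority
print(recourse_for("US", "aedt"))      # NYC Department of Consumer and Worker Protection
```

A single situation can match several rows (for example, a privacy violation and discrimination at once), in which case the paths can be pursued in parallel.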

EU — Filing with your national Data Protection Authority (free, online)

Each EU member state has its own DPA. In France: cnil.fr. In Germany: BfDI. In Ireland (key for big tech): DPC. In Spain: AEPD. Attach: the job ad, your CV, correspondence with the company, your rights-exercise letter, and any non-response. Average processing time in 2026: 4 to 6 months.

EU — Filing with an equality body (free)

If you suspect discrimination (gender, race, age, disability, religion, sexual orientation), file with your national equality body — Défenseur des droits in France (defenseurdesdroits.fr), EHRC in the UK, or a member of Equinet. They can investigate, make recommendations to the employer, and bring cases to court themselves.

EU — Labor court (rarer, heavier)

Only possible if you can demonstrate concrete material or moral damage. Rare for a simple application rejection, more relevant when a written or otherwise firm offer is withdrawn without legitimate reason. Typically requires a lawyer (€1,500 to €3,500, sometimes covered by legal insurance or legal aid).

US — EEOC (free, online)

For discrimination claims under Title VII (race, color, religion, sex, national origin), ADA (disability), or ADEA (age 40+). File at eeoc.gov. Deadline: 180 days from the discriminatory act (300 days in states with equivalent state laws). The EEOC investigates, attempts conciliation, and can sue or issue a "right-to-sue letter" allowing you to file in federal court.

US — State attorney general / CPPA (California)

For CPRA violations, file with the California Attorney General or the California Privacy Protection Agency (CPPA). For Colorado AI Act violations, the state AG. For Illinois AIVLA, the state AG. Penalties can include statutory damages ($100-$750 per consumer per incident under CPRA for certain violations) and injunctive relief.

US — NYC DCWP

For Local Law 144 violations on NYC-based roles: nyc.gov/dcwp. Penalties: $500 for a first violation, $1,500 for subsequent violations, per job posting, per day. The amounts are small per violation, but because they accrue per posting and per day, the total can grow fast.
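To get a feel for how those amounts add up, here is a back-of-the-envelope sketch. It assumes that every posting on every day counts as one separate violation and that the maximum amounts quoted above apply, so it gives an upper-bound illustration and not a prediction of what the DCWP would actually impose. The function name is hypothetical.

```python
def ll144_exposure(postings: int, days: int,
                   first: int = 500, subsequent: int = 1500) -> int:
    """Upper-bound penalty in dollars.

    Assumption: each posting on each day is one violation; the first costs
    `first`, every later one costs `subsequent`.
    """
    violations = postings * days
    if violations <= 0:
        return 0
    return first + subsequent * (violations - 1)


# Hypothetical example: 3 non-compliant postings left online for 30 days.
print(ll144_exposure(3, 30))  # 134000
```

With 3 postings over 30 days there are 90 violations: $500 for the first and $1,500 for each of the remaining 89, which gives $134,000.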

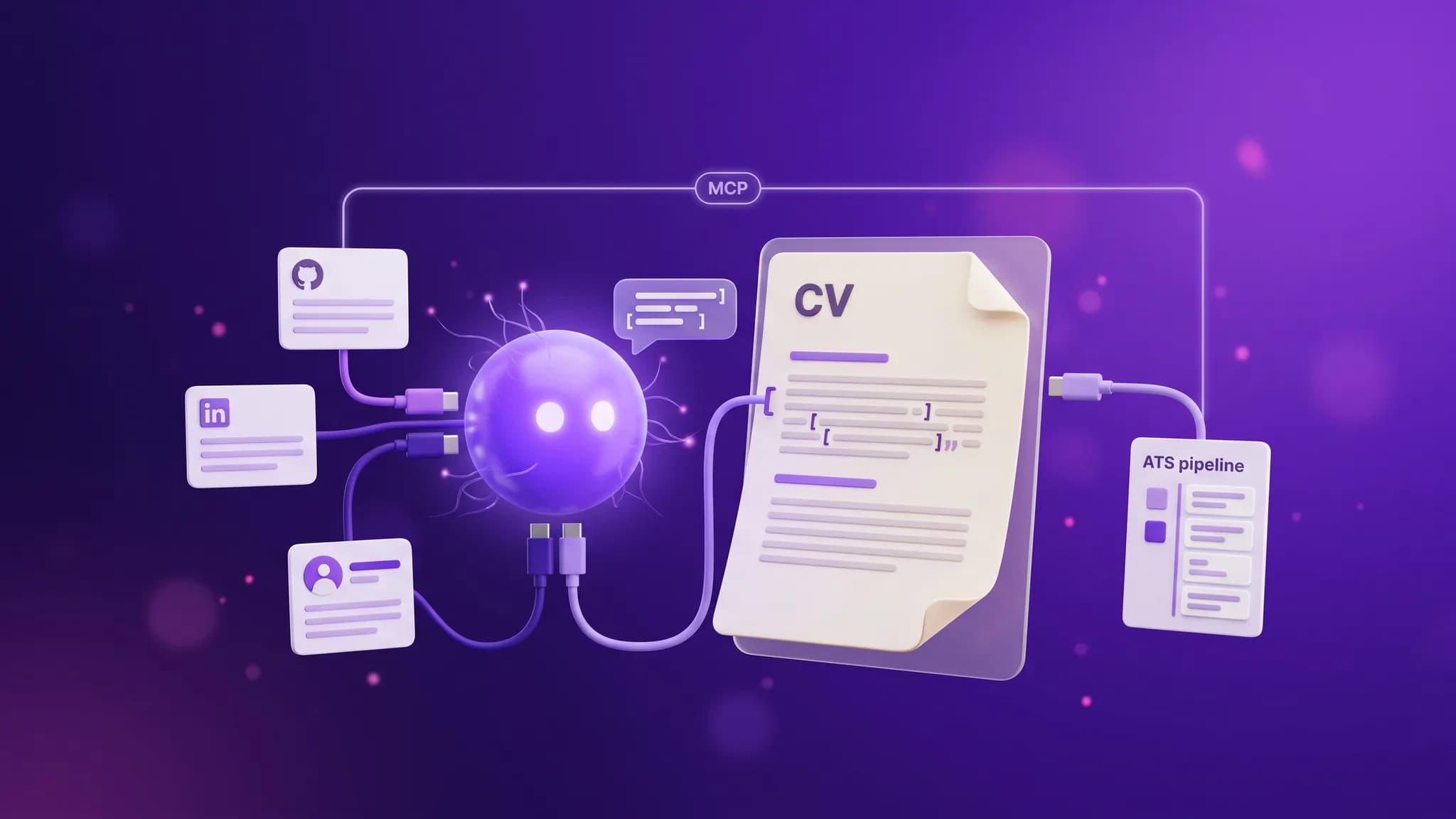

Panorama of major AI recruitment tools in 2026

Non-exhaustive list of tools you'll most often encounter in the EU/US, so you know what's being used if you ask.

- Workday Recruiting (ATS with AI scoring) — used by most large EU/US employers (LVMH, Airbus, Sanofi, Orange in EU; Amazon, Target, Bank of America in US)

- Greenhouse (ATS without default AI scoring but options available) — more common in tech/scaleups

- iCIMS Talent Cloud — large US enterprises

- Lever (ATS) — used by Netflix, Spotify, KPMG

- SmartRecruiters — used by Ikea, Twitter/X, Visa

- HireVue (asynchronous video interview with AI analysis) — 700+ companies including Hilton, Unilever, Goldman Sachs

- Pymetrics / Arctic Shores / AssessFirst (AI gamified psychometric tests) — increasingly used at internship and entry-level roles

- Paradox Olivia / Mya (pre-qualification chatbots) — retail, hospitality, high-volume hiring

- Eightfold AI / Beamery (AI talent marketplace) — especially for internal mobility programs

- Bryq / Plum / Criteria Corp (AI skills and cognitive assessments) — common for graduate programs

Many of these tools are useful and legal when used correctly (information, human oversight, bias audit). The AI Act and US state laws will push vendors to compliance — and you, the candidate, gain the right to verify.

FAQ

Do EU companies really use AI to screen CVs?

Yes, on a large scale: 65% of large EU companies (250+ employees) in 2026, steadily rising since 2022 (APEC / Deloitte barometer). In SMEs, it's rarer but growing. What's deployed ranges from simple ATS parsing (not AI strictly speaking) to full AI scoring on CV-job matching.

Do I need my own DPO to file a complaint?

No. You're not responsible for the processing of your own data — the employer (or the ATS vendor in some cases) is. You can file with the DPA or equality body without a lawyer, without a DPO, for free.

Does the template letter work on a small company?

Yes, GDPR applies to all employers regardless of size. Small companies have less formal processes but the same obligations. In practice, the letter often pushes them to not repeat mistakes — it's a great tool for legal pedagogy.

How long can the company retain my CV?

CNIL recommendation: 2 years maximum after the last contact, unless there's explicit consent for a longer duration (e.g., "talent pool"). Beyond that, it must be deleted. You can request deletion at any time (GDPR Article 17). Similar principles apply under CCPA §1798.105.

Does the AI Act protect better than GDPR?

It adds specific layers for high-risk AI: bias audits, reinforced human oversight, incident reporting, EU registry of deployed systems. GDPR remains the base on personal data itself. The two combine — no need to choose.

What if the company refuses to give me access to my AI scores?

That's a direct GDPR violation (Article 15). File with your DPA — it's one of the simplest cases to process: access refusal is systematically sanctioned. The company must give you the scores or document why it cannot (extremely rare that they actually cannot).

Are external recruitment agencies covered too?

Yes, as data processors of the controller (the client company). You can exercise your rights either with the agency directly or with the hiring company. Both are jointly liable under GDPR.

Can an AI discriminate without anyone knowing (including the employer)?

This is the most frequent and most worrying scenario. Algorithmic biases are sometimes invisible to the humans deploying the tool — which is precisely why the AI Act imposes systematic bias audits (Article 10) and full technical documentation from August 2026. The individual candidate alone can't prove the discrimination: they must file with the DPA or equality body, which can then demand the audits.

If I apply to a US company with an EU branch, which law applies?

If the job is posted by the EU entity or targets an EU candidate, GDPR + AI Act apply. If the job is fully US-based and the processing happens in the US, rely on state law + EEOC guidance. Many multinationals apply GDPR globally as a de facto standard, which simplifies things for candidates.

Takeaways

- On August 2, 2026, the AI Act becomes applicable to high-risk AI systems, including recruitment tools (Annex III point 4). New rights: information, oversight, explanation.

- You already have 10 enforceable rights in 2026 — GDPR (information, access, rectification, no fully automated decisions, human intervention, objection, erasure) + AI Act (clear explanation, bias audit, oversight). All usable through a template letter.

- 4 landmark cases: Amazon 2018 (sexist tool withdrawn), HireVue 2021 (end of facial analysis), Workday 2024-2026 (1.1M applications class action), CNIL 2023 (€105k fine in France).

- Parallel recourse paths in the EU: national DPA (GDPR/AI Act violations, free), equality body (discrimination, free), labor court (demonstrated damage). In the US: EEOC, state AG, CPPA, NYC DCWP, federal/state courts.

- AI in recruitment isn't the problem — opaque and unsupervised use is. A compliant employer tells you they use AI, explains their criteria, has human oversight, and audits their biases.

The AI Act marks a real shift: for the first time since GDPR, the candidate regains bargaining power in an increasingly dehumanized hiring process. Knowing your rights and knowing how to exercise them has become a career skill in its own right in 2026 — just like writing a CV or preparing for an interview.